Authors: Cheng Wan, Runkao Tao, Zheng Du, Yang Katie Zhao, Yingyan Celine Lin

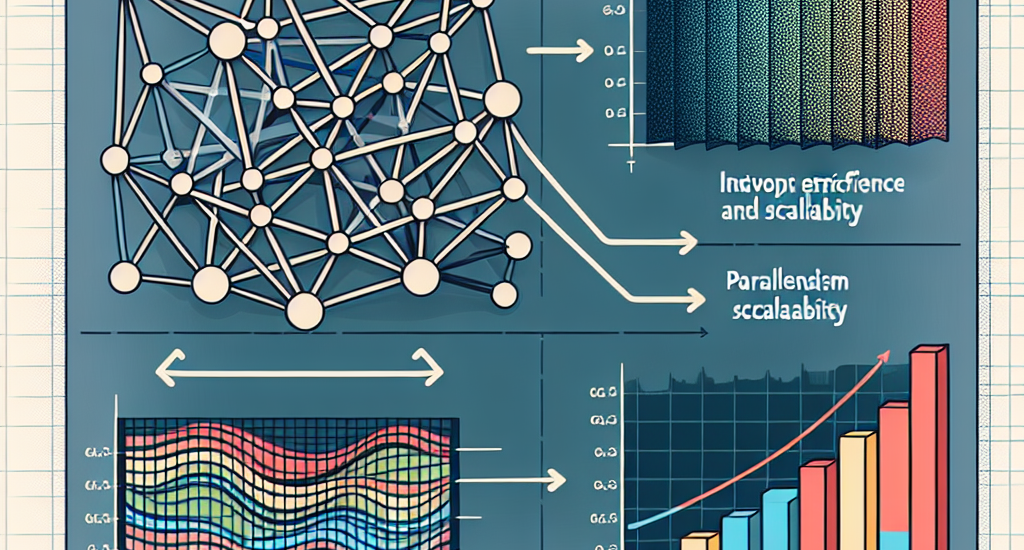

Abstract: Graph convolutional networks (GCNs) have demonstrated superiority in graph-based learning tasks. However, training GCNs on full graphs is particularly difficult for two reasons: (1) the associated feature tensors can easily exceed the memory capacity and saturate the communication bandwidth of modern accelerators, and (2) the computation workflow in training GCNs alternates between sparse and dense matrix operations, complicating the efficient utilization of computational resources. Existing solutions for scalable distributed full-graph GCN training mostly adopt partition parallelism, which is unsatisfactory because it only partially addresses the first challenge while incurring communication volume that grows as the system scales out. To this end, we propose MixGCN, which aims to address both challenges simultaneously. To tackle the first challenge, MixGCN integrates a mixture of parallelism; both theoretical and empirical analyses verify its constant communication volume and improved workload balance. To handle the second challenge, we consider a mixture of accelerators (i.e., sparse and dense accelerators), with a dedicated accelerator for GCN training and a fine-grained pipeline. Extensive experiments show that MixGCN improves training efficiency and scalability.
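
The alternation between sparse and dense operations mentioned in the second challenge can be illustrated with a minimal sketch of a generic GCN layer. This is not MixGCN's implementation: it assumes the standard formulation in which a layer first aggregates neighbor features (a sparse matrix product) and then applies a learned weight matrix followed by ReLU (a dense matrix product). The function names `aggregate`, `update`, and `gcn_layer`, the adjacency-list layout, and the toy values are all hypothetical.

```python
# Illustrative only: one forward pass of a generic GCN layer, split into
# its sparse and dense phases. All names and values are hypothetical.

def aggregate(adj, features):
    """Sparse phase (SpMM-like): weighted sum of neighbor features.

    adj maps each node id to a list of (neighbor id, edge weight) pairs,
    so the work done is proportional to the number of edges.
    """
    dim = len(features[0])
    out = [[0.0] * dim for _ in features]
    for node, neighbors in adj.items():
        for nbr, w in neighbors:
            for k in range(dim):
                out[node][k] += w * features[nbr][k]
    return out


def update(hidden, weight):
    """Dense phase (GEMM-like): hidden @ weight, followed by ReLU."""
    cols = len(weight[0])
    return [
        [max(0.0, sum(row[i] * weight[i][j] for i in range(len(row))))
         for j in range(cols)]
        for row in hidden
    ]


def gcn_layer(adj, features, weight):
    # The two phases have very different access patterns: irregular,
    # memory-bound aggregation, then regular, compute-bound update.
    return update(aggregate(adj, features), weight)


# Toy graph: nodes 0 and 1 average each other; node 2 only has a self-loop.
adj = {0: [(0, 0.5), (1, 0.5)], 1: [(0, 0.5), (1, 0.5)], 2: [(2, 1.0)]}
features = [[1.0, 2.0], [3.0, 4.0], [-1.0, 0.0]]
weight = [[1.0, 0.0], [0.0, 1.0]]  # identity, to keep the result easy to read
result = gcn_layer(adj, features, weight)
```

In this sketch the aggregation cost depends on the graph's edge structure while the update cost depends only on the feature dimensions, which is one way to see why a single type of accelerator may be poorly matched to both phases.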

Source: http://arxiv.org/abs/2501.01951v1