Authors: Shivam Duggal, Phillip Isola, Antonio Torralba, William T. Freeman

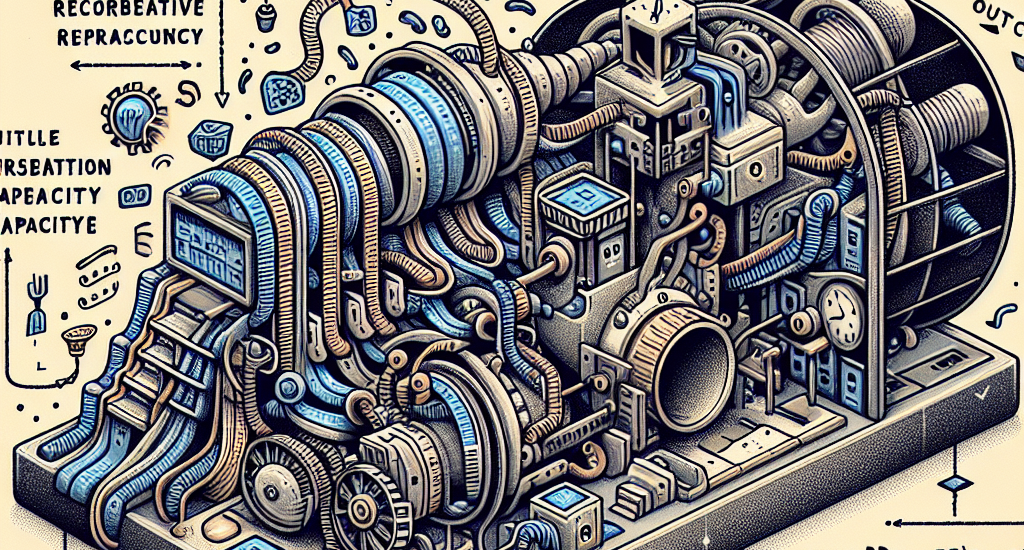

Abstract: Current vision systems typically assign fixed-length representations to

images, regardless of the information content. This contrasts with human

intelligence – and even large language models – which allocate varying

representational capacities based on entropy, context and familiarity. Inspired

by this, we propose an approach to learn variable-length token representations

for 2D images. Our encoder-decoder architecture recursively processes 2D image

tokens, distilling them into 1D latent tokens over multiple iterations of

recurrent rollouts. Each iteration refines the 2D tokens, updates the existing

1D latent tokens, and adaptively increases representational capacity by adding

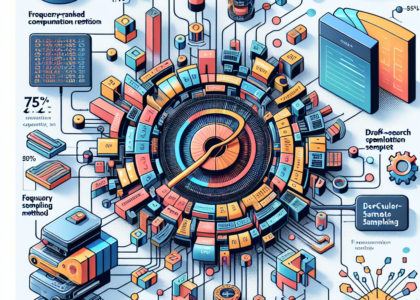

new tokens. This enables compression of images into a variable number of

tokens, ranging from 32 to 256. We validate our tokenizer using reconstruction

loss and FID metrics, demonstrating that token count aligns with image entropy,

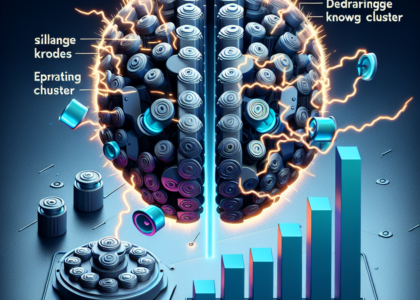

familiarity and downstream task requirements. Recurrent token processing with

increasing representational capacity in each iteration shows signs of token

specialization, revealing potential for object / part discovery.
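
As a rough illustration of how a variable token budget between 32 and 256 might be chosen per image, the sketch below walks a growing token schedule and stops at the first count whose reconstruction loss is acceptable. This is not the authors' implementation: the step size of 32 tokens per iteration, the threshold-based stopping rule, and the names `token_schedule`, `select_token_count`, and `loss_at` are all assumptions made for the example, and the toy loss function stands in for a real encoder-decoder pass.

```python
from typing import Callable, List, Optional


def token_schedule(min_tokens: int = 32, max_tokens: int = 256, step: int = 32) -> List[int]:
    """Token counts available after each recurrent iteration.

    The 32..256 range comes from the abstract; the step of 32 is an assumption.
    """
    return list(range(min_tokens, max_tokens + 1, step))


def select_token_count(
    loss_at: Callable[[int], float],
    threshold: float,
    schedule: Optional[List[int]] = None,
) -> int:
    """Return the smallest token count whose reconstruction loss is <= threshold.

    `loss_at(n)` is a hypothetical callback that would run the tokenizer with
    n latent tokens and return the reconstruction loss. If no count meets the
    threshold, the largest count in the schedule is returned.
    """
    counts = schedule if schedule is not None else token_schedule()
    for n in counts:
        if loss_at(n) <= threshold:
            return n
    return counts[-1]


def toy_loss(complexity: float) -> Callable[[int], float]:
    """Stand-in loss: more complex images need more tokens to reach the same loss."""
    return lambda n: complexity / n


if __name__ == "__main__":
    print(token_schedule())
    print(select_token_count(toy_loss(2.0), threshold=0.1))    # simple image
    print(select_token_count(toy_loss(8.0), threshold=0.1))    # more complex image
    print(select_token_count(toy_loss(100.0), threshold=0.1))  # never meets threshold
```

With this toy loss, a low-complexity image stops at the minimum of 32 tokens, while a higher-complexity one needs more, which mirrors the abstract's claim that token count tracks image entropy. A real selection rule could equally depend on familiarity or on a downstream task metric rather than reconstruction loss alone.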

Source: http://arxiv.org/abs/2411.02393v1